Abrupt skill emergence in Large Language Models

This post assumes you’re familiar with the Neural Scaling Laws we discussed last week. If you’re not, read more here:

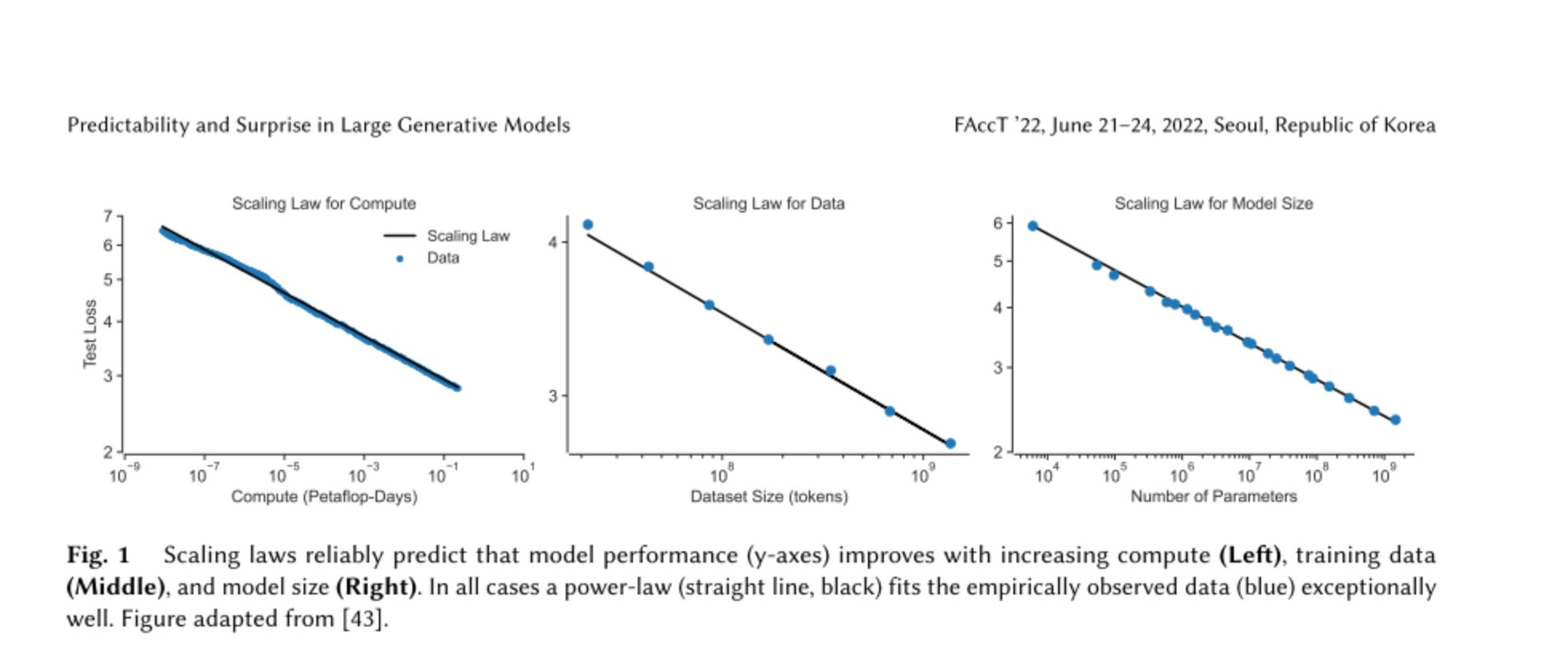

We can predict general Large Language Model performance as a function of compute, dataset size, and parameter count.
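To make "predict" concrete, here is a minimal sketch that assumes the simplest possible form of a scaling law, loss(C) = a · C^(−b), with no irreducible-loss term. The constants `TRUE_A` and `TRUE_B` and the compute budgets are made up for illustration; real scaling-law fits use measured training runs and usually richer formulas. The sketch fits a straight line in log-log space to a few small "runs" and extrapolates 1,000× beyond the largest one.

```python
import math

# Hypothetical power law: loss(C) = a * C ** (-b).
# These constants are illustrative assumptions, not fitted values from any paper.
TRUE_A, TRUE_B = 20.0, 0.05

def fit_power_law(compute, loss):
    """Fit loss = a * C**(-b) by ordinary least squares on log(loss) vs log(C)."""
    xs = [math.log(c) for c in compute]
    ys = [math.log(l) for l in loss]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx                     # equals -b
    intercept = mean_y - slope * mean_x   # equals log(a)
    return math.exp(intercept), -slope

def predict(a, b, compute):
    """Evaluate the fitted power law at a given compute budget (FLOPs)."""
    return a * compute ** (-b)

# Synthetic "small model" runs from 1e18 to 1e21 FLOPs, generated from the assumed law.
small_runs = [10.0 ** e for e in range(18, 22)]
losses = [predict(TRUE_A, TRUE_B, c) for c in small_runs]

a, b = fit_power_law(small_runs, losses)
# Extrapolate to 1e24 FLOPs, i.e. 1,000x more compute than the largest small run.
print(round(b, 3), round(predict(a, b, 1e24), 3))
```

Because a power law is a straight line on log-log axes, least squares on the logarithms is enough for this noise-free toy data; noisy real measurements call for more careful fitting.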

However, if we shift our focus from general performance (next-token prediction) to specific tasks like adding numbers or writing code, the picture changes.

We do not have scaling laws for task-specific performance. Instead, graphs of specific model capabilities versus parameter count show abrupt, emergent jumps in proficiency.¹

For example, GPT-3 models with fewer than 10¹⁰ parameters fail to add two 3-digit numbers.

Yet once GPT-3 reaches 10¹⁰ parameters, accuracy jumps from 0% to 20%, and at 10¹¹ parameters, the model adds the numbers correctly 80% of the time.

Other model skills exhibit similar abruptness. Consider the “Word Unscramble” task, which is what you call getting the model to play Scrabble if you’re looking to publish.

I give the model the prompt:

The word hte is a scrambled version of the English word _

And the model — if it’s capable — says “the.”
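Here is a minimal sketch of how such a task could be scored, assuming exact-match grading. The `model` argument is a hypothetical stand-in for any callable that maps a prompt string to a completion string; it is not a real library API, and the helper names (`scramble`, `make_prompt`, `score`, `make_lookup_model`) are illustrative.

```python
import random

def scramble(word, rng):
    """Shuffle the letters of `word`, retrying until the result differs from the original."""
    letters = list(word)
    if len(set(letters)) < 2:
        return word  # e.g. "aa" cannot be scrambled into anything new
    while True:
        rng.shuffle(letters)
        candidate = "".join(letters)
        if candidate != word:
            return candidate

def make_prompt(scrambled):
    # Mirrors the prompt wording used in the post; the model completes the blank.
    return f"The word {scrambled} is a scrambled version of the English word"

def score(model, words, seed=0):
    """Exact-match accuracy of `model` (a hypothetical str -> str callable) on `words`."""
    rng = random.Random(seed)
    correct = 0
    for word in words:
        prompt = make_prompt(scramble(word, rng))
        answer = model(prompt).strip().strip(".").lower()
        correct += answer == word
    return correct / len(words)

def make_lookup_model(vocab):
    """A cheating toy 'model' that maps sorted letters back to a known word."""
    index = {"".join(sorted(w)): w for w in vocab}
    def model(prompt):
        scrambled = prompt.split()[2]  # third token of the prompt is the scrambled word
        return index.get("".join(sorted(scrambled)), "")
    return model

words = ["the", "apple", "river"]
print(score(lambda prompt: "the", words))      # a model that always says "the"
print(score(make_lookup_model(words), words))  # the lookup "model" solves every item
```

Exact match is a strict, all-or-nothing metric: a model gets no credit for an answer that is one letter off.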

However, models aren’t capable Word Unscramblers at any training compute below ~10²² FLOPs. This is a critical threshold, much like the melting point of ice: 32 degrees Fahrenheit. If you try to melt an ice cube by heating it from 20 to 25 degrees Fahrenheit, you might as well do nothing at all.

Similarly, to improve Word Unscramble performance, we need to add compute until we hit ~10²² FLOPs. Once we do, models suddenly and unpredictably begin learning how to unscramble words.²

These abrupt jumps in capability are not unique to Word Unscramble. Look at the Persian QA graph above. At 10¹⁸ FLOPs, the model doesn’t speak Persian. At 10²⁰ FLOPs, it still doesn’t speak Persian. But at ~10²⁴ FLOPs, it speaks Persian.³

What other capabilities might emerge as we train larger models? PhD-level cancer research abilities? Superhuman manipulation? Given the increasingly large compute budgets for training models — Inflection recently announced their intention to build one of the world’s largest clusters with 22,000 Nvidia H100s — we’re about to find out.

¹ In most cases, anyway. OpenAI was able to predict specific coding capabilities for GPT-4: “In addition to predicting final loss, we developed methodology to predict more interpretable metrics of capability. One such metric is pass rate on the HumanEval dataset [43], which measures the ability to synthesize Python functions of varying complexity. We successfully predicted the pass rate on a subset of the HumanEval dataset by extrapolating from models trained with at most 1,000× less compute (Figure 2).”

² This is specific to the model architectures and datasets in the paper. It isn’t known whether learning Word Unscramble at this FLOP count is a general feature of transformers.

³ You might be wondering why I claim the model doesn’t speak Persian at lower FLOPs even though it scores 25% on the task. A 25% score is no better than random guessing, because the model must choose the correct answer from four choices.
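A quick way to check whether a multiple-choice score beats guessing is sketched below, assuming every question has the same number of choices and the questions are independent. The 100-question counts are hypothetical; the post does not say how many Persian QA items there are. The test is a one-sided exact binomial tail: how likely is a score at least this high if the model were picking uniformly at random?

```python
from math import comb

def chance_accuracy(num_choices):
    """Expected accuracy of uniform random guessing on a multiple-choice task."""
    return 1 / num_choices

def p_value_above_chance(correct, total, num_choices):
    """One-sided exact binomial test: P(X >= correct) if the model were guessing."""
    p = chance_accuracy(num_choices)
    return sum(
        comb(total, k) * p**k * (1 - p) ** (total - k)
        for k in range(correct, total + 1)
    )

print(chance_accuracy(4))                # 0.25
print(p_value_above_chance(25, 100, 4))  # large: 25/100 is what guessing typically produces
print(p_value_above_chance(40, 100, 4))  # small: 40/100 is unlikely under pure guessing
```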